ReliaQuest is proud to announce the publication of our AI-Powered Cybercrime report, which provides a detailed overview of the rapidly evolving landscape of artificial intelligence (AI) and the misuse of large language models (LLMs) by cybercriminals. The report emphasizes the need for defenders to develop a proactive and informed cybersecurity strategy, one that includes using AI to counter adversaries’ use of the same technology. It offers customized, actionable advice and best practices to help organizations mitigate the risks associated with AI-driven cyber threats. By gaining a thorough understanding of the current capabilities and limitations of AI in cybercrime, stakeholders can strengthen their defenses against advanced adversaries and secure their digital assets.

The report begins by summarizing how threat actors discuss AI in dedicated sections of cybercriminal forums, where they share tips, tricks, and methods such as “Do Anything Now” (aka “DAN”) prompts for manipulating AI for malicious purposes. In this blog, we’ll summarize the report’s remaining focus: three ways in which cybercriminals currently use AI in their operations, and how organizations can mitigate these threats.

AI-Enhanced Phishing

ReliaQuest identified “phishing via a malicious link” as the leading initial access technique in Q1 2024, involved in 27.2% of the critical security incidents we investigated for our customers. Threat actors are leveraging AI to enhance their phishing campaigns, using malicious LLMs to generate convincing phishing emails (sometimes in languages they don’t speak) and to troubleshoot campaign efficacy. In our own experiment, we found that even a basic AI-enhanced phishing email, generated in a very short timeframe, still yielded measurable outcomes, demonstrating that even inexperienced threat actors can execute effective phishing campaigns with minimal effort.

To test how easily AI can spin up a phishing email, ReliaQuest ran a phishing campaign against approximately 1,000 individuals, using ChaosGPT to generate the message. Multiple users clicked the phishing link, and some even supplied their credentials, despite the minimal effort that went into creating the campaign.

Users across multiple cybercriminal forums frequently discuss ways to create tailored phishing pages to conduct their attacks, supplementing an existing market selling pre-made phishing pages to attackers.

LLMs let threat actors craft emails free of spelling and grammatical errors in various languages, which helps them scale their operations.

AI does not introduce new phishing techniques, but it allows cybercriminals to strengthen, speed up, and scale their phishing campaigns.
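Because AI-written emails no longer give themselves away through spelling or grammar, defenders may want to lean on structural signals instead. The following is a minimal Python sketch of one such signal, not something taken from the report: it flags links whose visible text shows one domain while the underlying `href` points to another. The names `LinkCollector` and `mismatched_links` are hypothetical, and the domain-matching regex is a deliberately simple assumption.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collects (href, visible_text) pairs for every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        # Only keep text that appears inside an open <a href=...> element.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


# Assumption: a "domain" in link text looks like label.label.tld,
# optionally prefixed with http:// or https://.
DOMAIN_IN_TEXT = re.compile(r"(?:https?://)?((?:[a-z0-9-]+\.)+[a-z]{2,})", re.I)


def mismatched_links(html_body):
    """Return (visible_text, actual_host) for links whose text names another host."""
    parser = LinkCollector()
    parser.feed(html_body)
    parser.close()
    flagged = []
    for href, text in parser.links:
        target = (urlparse(href).hostname or "").lower()
        shown = DOMAIN_IN_TEXT.search(text)
        if target and shown and shown.group(1).lower() != target:
            flagged.append((text, target))
    return flagged
```

This is a heuristic only: it compares hostnames exactly, so a legitimate link that displays `example.com` but points to `www.example.com` would also be flagged, and it says nothing about links whose text contains no domain at all.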

Deepfakes in Social Engineering

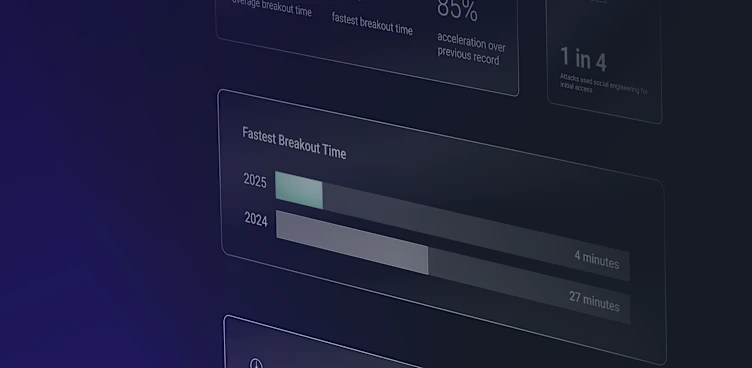

The threat of deepfakes—artificially created or enhanced audio or video—is increasing. Our analysis revealed that the Black Basta ransomware group has used AI to generate deepfakes to enhance mass email spam and voice phishing (vishing) campaigns, while the FBI warned of increasing threats from AI-powered voice and video cloning, which can convincingly impersonate trusted individuals. Deepfakes, easily created with AI tools discussed on cybercriminal forums, allow even novices to produce realistic voice and video impersonations. These incidents highlight the growing sophistication of deepfake attacks and the need for vigilant, adaptive cybersecurity defenses.

Deepfakes are increasingly discussed as a method to circumvent “Know Your Customer” (KYC) processes, with cybercriminals sharing tutorials and seeking services from skilled creators to facilitate fraud.

External case studies show successful and attempted deepfake attacks on organizations, including a $25 million heist through a deepfake video call and various impersonation attempts via WhatsApp and video conferencing platforms.

The increasing sophistication of deepfake attacks highlights how threat actors are conducting extensive research and using publicly available content to craft realistic impersonations, enhancing the effectiveness of social engineering scams.

AI-Enhanced Scripting

Security researchers report that advanced persistent threat (APT) and nation-state actors are employing LLMs to enhance their script generation capabilities, making their operations more efficient and harder to detect. While these actors aren’t solely relying on AI, they’re using it to automate tasks such as renaming variables, restructuring code, and encoding strings, as seen with groups like Russia’s “Forest Blizzard,” North Korea’s “Emerald Sleet,” and Iran’s “Crimson Sandstorm.” ReliaQuest’s investigations revealed that cybercriminals are using platforms like FlowGPT to generate malicious scripts, exemplified by ChaosGPT creating exploits upon request. Experiments demonstrated that LLMs can quickly produce obfuscated scripts and automate tasks like identifying user logon events, underscoring the potential for AI to significantly augment cyber threats by reducing the time and effort required to generate and refine malicious code.

Experiments with LLMs like Mixtral-8x7B-T yielded working PowerShell scripts for identifying user logon events and deploying files across endpoints, highlighting the ease of generating functional scripts that can be refined for cyber operations.

Reports indicate that groups like “Scattered Spider” have used LLMs like “Llama 2 70B” to generate scripts for malicious activities, such as downloading user credentials during intrusions.

Investigations show that cybercriminals are testing LLMs for generating malicious scripts and exploit code, as evidenced by interactions on platforms like FlowGPT, where users request and receive exploit scripts from models like ChaosGPT.
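Since string encoding is one of the obfuscation tasks described above, one defensive angle is to look for long encoded literals in scripts before they run. The sketch below is an illustrative assumption rather than a method from the report: it finds long Base64-looking runs, checks that they decode cleanly, and reports those whose character entropy exceeds a threshold. The function names, the 40-character minimum, and the 4.0-bit threshold are all hypothetical choices that would need tuning against real data.

```python
import base64
import binascii
import math
import re
from collections import Counter

# Assumption: encoded payloads appear as runs of 40+ Base64 characters.
BASE64_RUN = re.compile(r"[A-Za-z0-9+/]{40,}={0,2}")


def shannon_entropy(text):
    """Shannon entropy of the character distribution, in bits per character."""
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def encoded_string_findings(script_text, min_entropy=4.0):
    """Return (offset, length, entropy) for each suspicious Base64-like run."""
    findings = []
    for match in BASE64_RUN.finditer(script_text):
        candidate = match.group(0)
        try:
            # validate=True rejects bad padding and non-alphabet characters.
            base64.b64decode(candidate, validate=True)
        except (binascii.Error, ValueError):
            continue
        entropy = shannon_entropy(candidate)
        if entropy >= min_entropy:
            findings.append((match.start(), len(candidate), round(entropy, 2)))
    return findings
```

A finding here is only a prompt for review: legitimate scripts embed certificates, images, and other encoded data, so a check like this would more plausibly feed a triage queue than block anything on its own.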

The Takeaway

AI and LLMs have significantly augmented cybercriminal operations. The rapid advancement of these technologies, along with their growing adoption by cybercriminals, presents new challenges for organizations. While AI itself does not introduce new attack techniques, it accelerates attacks through automation and enables larger-scale operations. By examining the threats LLMs pose to organizations through experiments and case studies, the report provides a clearer picture of AI-enabled threats. By understanding the current capabilities and limitations of AI in the context of cybercrime, stakeholders can better defend against sophisticated adversaries and protect their digital assets.

ReliaQuest’s research is focused on providing organizations with the essential knowledge and strategies to anticipate and counter cyber risks. This dedication aligns with ReliaQuest’s mission to help organizations increase visibility, reduce complexity, and manage risk, thereby significantly reducing the impact of cyber threats on global security.